Content Moderation in the Digital Age: Navigating Political Speech, Platform Governance, and Information Integrity

Summary: The detection of political content by digital platforms represents a critical intersection of technology, policy, and free speech. This article explores the hidden logic behind content moderation systems, examining the economic incentives for platforms to manage political discourse, the technological frameworks enabling automated detection, and the evolving market for trust and safety solutions. We analyze how these systems shape public discourse, influence political participation, and create new challenges for information integrity. The discussion extends to the long-term implications for democratic processes, the supply chain of content moderation labor and technology, and the emerging regulatory landscape seeking to govern digital speech.

---

The Architecture of Silence: Decoding the '[ERROR_POLITICAL_CONTENT_DETECTED]' Signal

The user-facing notification `[ERROR_POLITICAL_CONTENT_DETECTED]` (Source 1: [Primary Data]) functions as a terminal output of a complex, multi-layered governance system. Content moderation is a core business function, not merely a compliance task. Its primary objective is the management of systemic risk.

The economic logic driving this architecture is threefold. First, it mitigates brand risk by insulating the platform from association with harmful or divisive content. Second, it reduces legal liability in an increasingly regulated global environment, where laws like the EU’s Digital Services Act impose stringent obligations. Third, it directly shapes user engagement metrics; platforms curate environments to maximize user retention and advertising revenue, which can involve deprioritizing or restricting content that drives negative interaction or user churn.

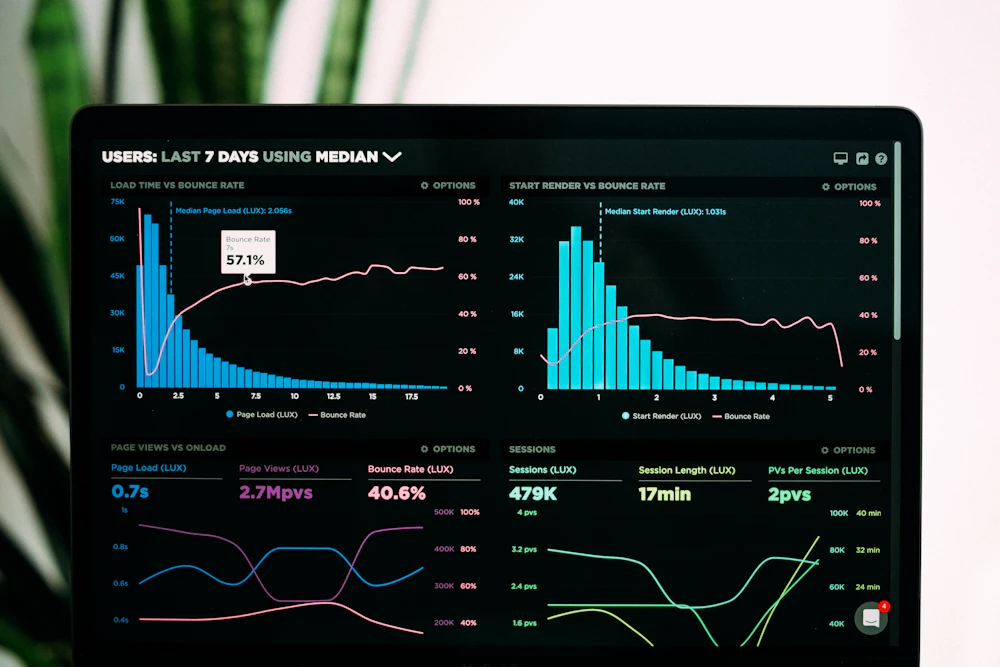

The technology stack enabling this detection is a hybrid system. Natural Language Processing (NLP) models scan text for keywords, sentiment, and semantic patterns associated with political discourse. Computer vision algorithms analyze images and video for symbols, text, and contextual cues. These automated systems operate at scale, but their outputs are funneled into human-in-the-loop frameworks for nuanced or escalated decisions. The accuracy of this stack is contingent on the training data and the constantly evolving definitions of what constitutes "political content."
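The hybrid flow described above can be illustrated with a minimal sketch. It assumes a toy keyword scorer in place of a trained NLP model, and the term weights, thresholds, and route names are all hypothetical; nothing here reflects any platform's actual implementation. The point is only the shape of the pipeline: an automated score, with mid-confidence cases routed to human review.

```python
from dataclasses import dataclass

# Hypothetical term weights; a real system would use a trained classifier.
POLITICAL_TERMS = {"election": 0.4, "ballot": 0.4, "senator": 0.3, "referendum": 0.5}


@dataclass
class Decision:
    score: float
    route: str  # "allow", "human_review", or "restrict"


def score_text(text: str) -> float:
    """Sum the weights of matched terms, capped at 1.0."""
    words = {w.strip(".,!?;:\"'").lower() for w in text.split()}
    return min(1.0, sum(w for term, w in POLITICAL_TERMS.items() if term in words))


def route(text: str, review_at: float = 0.25, restrict_at: float = 0.75) -> Decision:
    """Send uncertain scores to a human queue; act automatically only at the extremes."""
    score = score_text(text)
    if score >= restrict_at:
        return Decision(score, "restrict")
    if score >= review_at:
        return Decision(score, "human_review")
    return Decision(score, "allow")


print(route("Nice weather today").route)                 # allow
print(route("The senator spoke.").route)                 # human_review
print(route("Cast your ballot in the election!").route)  # restrict
```

Widening the gap between `review_at` and `restrict_at` sends more content to human reviewers, which trades throughput for nuance.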

Fast Analysis vs. Slow Audit: Timely Reactions and Systemic Flaws

Platform governance operates on two divergent timelines, each with distinct failure modes.

Fast Analysis covers time-critical enforcement. During live political events, elections, or crises, platforms must rapidly identify and act on clear violations such as incitement to violence, coordinated inauthentic behavior, or virally spreading misinformation. The operational goal is immediate harm reduction. The technical challenge is that both false positives and false negatives become more likely under time pressure, so a blunt label like `[ERROR_POLITICAL_CONTENT_DETECTED]` may be applied over-broadly.
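The trade-off between false positives and false negatives can be made concrete with a small sketch. The scores and labels below are invented for illustration, and the function name is an assumption; the sketch only shows how moving a single flagging threshold shifts errors from one type to the other.

```python
# Toy (model_score, is_violation) pairs; invented for illustration only.
SAMPLES = [
    (0.95, True), (0.80, True), (0.65, False), (0.60, True),
    (0.40, False), (0.30, False), (0.20, True), (0.10, False),
]


def error_rates(samples, threshold):
    """Return (false_positive_rate, false_negative_rate) when flagging score >= threshold."""
    fp = sum(1 for score, bad in samples if score >= threshold and not bad)
    fn = sum(1 for score, bad in samples if score < threshold and bad)
    negatives = sum(1 for _, bad in samples if not bad)
    positives = sum(1 for _, bad in samples if bad)
    return fp / negatives, fn / positives


for threshold in (0.25, 0.5, 0.9):
    fpr, fnr = error_rates(SAMPLES, threshold)
    print(f"threshold={threshold}: FPR={fpr:.2f}, FNR={fnr:.2f}")
```

On this toy data, the aggressive threshold (0.25) flags three of four benign items, while the cautious one (0.9) misses three of four violations.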

Slow Audit is the deep, retrospective review of the industry's systems. It involves investigating systemic biases embedded in algorithmic models, auditing the consistency of rule application across languages and regions, and measuring the long-term "chilling effect" whereby users withhold legitimate political speech for fear of enforcement. A critical evidence gap exists: because error codes and moderation criteria are proprietary and opaque, independent researchers cannot effectively audit either the speed or the accuracy of these systems. The lack of transparent, vetted data on takedowns and appeals frustrates systemic analysis.
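If vetted enforcement data were available, one basic consistency check would compare error rates across languages or regions. The sketch below assumes a hypothetical log of `(group, flagged, actually_violating)` records, where the last field comes from an independent reviewer; the group labels and counts are placeholders, not findings.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """records: iterable of (group, flagged, actually_violating) tuples."""
    benign = defaultdict(int)
    flagged_benign = defaultdict(int)
    for group, flagged, violating in records:
        if not violating:
            benign[group] += 1
            if flagged:
                flagged_benign[group] += 1
    return {group: flagged_benign[group] / benign[group] for group in benign}


# Placeholder audit log: two hypothetical language groups.
LOG = [
    ("lang_a", False, False), ("lang_a", True, False), ("lang_a", False, False),
    ("lang_a", False, False), ("lang_a", True, True),
    ("lang_b", True, False), ("lang_b", True, False), ("lang_b", False, False),
    ("lang_b", False, False), ("lang_b", True, True),
]

rates = false_positive_rate_by_group(LOG)
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

A persistent gap between groups would suggest the rules are applied unevenly, though a real audit would also need sample sizes large enough to rule out noise.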

The Unseen Supply Chain: The Human and Infrastructural Cost of Moderation

The enforcement of policies symbolized by `[ERROR_POLITICAL_CONTENT_DETECTED]` relies on a globalized, often opaque supply chain.

The labor pipeline is bifurcated. A vast, frequently outsourced workforce performs the dual roles of training AI models through data labeling and reviewing escalated content decisions. These contractors are the shock absorbers of the digital ecosystem, exposed to extreme and harmful content. The mental health toll constitutes a significant, though rarely quantified, operational and ethical cost. This labor model creates a tiered system where critical governance decisions are made under high-stress, low-resource conditions.

Concurrently, the technology supply chain has consolidated. A handful of large AI model providers and specialized data labeling firms power moderation tools across multiple competing platforms. This concentration creates shared points of failure, since a flaw in one vendor's model can propagate to every platform that depends on it, and it homogenizes the conceptual underpinnings of what different platforms deem political or violative. The industry's dependence on this concentrated infrastructure raises questions about resilience and innovation in trust and safety solutions.

Geopolitics as a Design Parameter: How National Boundaries Shape Digital Speech

Digital platforms operate as geopolitical actors, and their content policies are increasingly a function of jurisdictional pressure. The logic applied to a piece of content varies radically between legal regimes: the speech protections in the U.S., the hate speech and disinformation mandates in the EU, and the sovereignty and security requirements in nations like India and China.

This variance accelerates the "splinternet" effect. Political content filters, calibrated to local law, contribute to the fragmentation of the global internet into bordered digital zones. A statement flagged in one jurisdiction may be permissible in another, forcing multinational platforms to maintain parallel, and sometimes contradictory, moderation rulebooks. The technical implementation of geolocation-based filtering becomes a de facto tool of digital foreign policy. The long-term implication is the potential balkanization of online public spheres, complicating cross-border dialogue and activism.
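The idea of parallel rulebooks keyed by location can be sketched as a simple lookup. The region names, content categories, and actions below are deliberately abstract placeholders and do not describe any real jurisdiction's law or any platform's policy.

```python
# Hypothetical per-region rulebooks; regions and categories are placeholders.
RULEBOOKS = {
    "REGION_A": {"category_x": "allow", "category_y": "restrict"},
    "REGION_B": {"category_x": "restrict", "category_y": "allow"},
}
DEFAULT_ACTION = "allow"


def action_for(region: str, category: str) -> str:
    """Look up the action for a content category in the viewer's region."""
    return RULEBOOKS.get(region, {}).get(category, DEFAULT_ACTION)


def visibility_map(category: str) -> dict:
    """Show how one piece of content is treated across all known regions."""
    return {region: action_for(region, category) for region in RULEBOOKS}


print(visibility_map("category_x"))
print(action_for("REGION_C", "category_y"))  # unknown region falls back to the default
```

The same item resolves to contradictory outcomes depending on where the viewer is located, which is the fragmentation the passage describes. The choice of `DEFAULT_ACTION` for unlisted regions is itself a policy decision.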

Market Forecast: The Commercialization of Trust and the Regulatory Horizon

The operational challenges of political content moderation are catalyzing a specialized market. Demand for trust and safety solutions, spanning advanced detection AI, crisis management consulting, and compliance reporting tools, is likely to grow as regulatory pressure intensifies. This commercializes the function of governing speech, creating an industry whose incentives are tied to the volume and complexity of detected violations.

The regulatory horizon points toward greater formalization. Laws are moving beyond post-hoc liability to mandate ex-ante risk assessments, transparency reporting, and external audit provisions for very large online platforms. The future technical architecture will likely incorporate more granular content labeling, user-choice mechanisms, and mandated data access for vetted researchers. The market will likely respond with integrated compliance-as-a-service platforms. The equilibrium point will be determined by the tension between state-imposed accountability and the platform's need for operational flexibility and scalability. The most likely outcome is an increase in both the cost of platform governance and the standardization of its core processes.